Test Automation

Test Automation lets you define tests that run either as part of a deploy workflow or on their own, when you explicitly choose to execute them. To determine which tests to run, the framework consults the test automation strategy (see Test Automation Strategy below). The framework is flexible: you can map the tests to run by environment, instance, project stream, and so on.

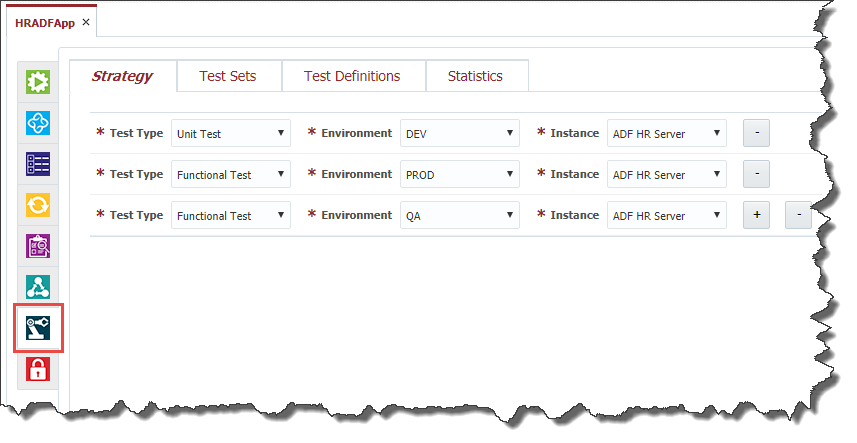

To configure the tests to run for a project, click the Test Automation tab.

Test Automation Strategy

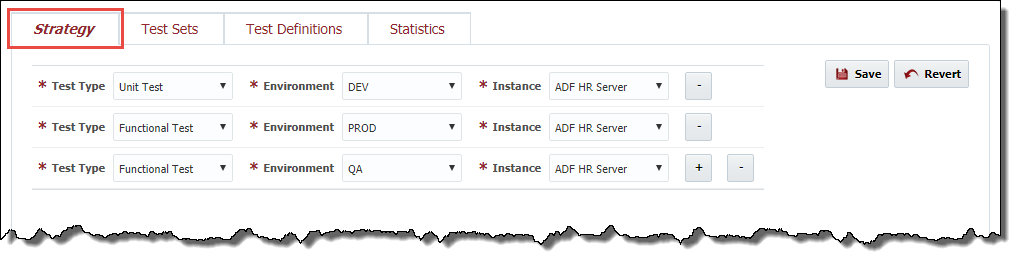

Test automation strategy defines the test types that can be performed for a project at each environment and instance where the project is deployed. QA engineers usually set up different instances of the application for different test types. For example, one instance may be good enough for functional testing because it exposes a lot of functionality, yet be unsuitable for performance testing, which calls for a separate instance with a large amount of data.

To set up a new combination of test type, environment, and instance, click the plus button and select values from the drop-down lists. To delete a combination, click the minus button.

To save the test automation strategy for the project, click the Save button. To cancel the changes, click the Revert button.
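
As a rough mental model, the strategy can be pictured as a list of (test type, environment, instance) rows that is filtered when tests are about to run. The sketch below is illustrative only: the `StrategyEntry` type, the `types_for` helper, and the environment and instance codes are hypothetical and do not reflect how FlexDeploy stores or evaluates the strategy.

```python
from typing import NamedTuple


class StrategyEntry(NamedTuple):
    """One row of the strategy table (hypothetical model)."""
    test_type: str
    environment: str
    instance: str


# Hypothetical rows, one per combination added with the plus button.
STRATEGY = [
    StrategyEntry("Functional", "QA", "APP1"),
    StrategyEntry("Performance", "QA", "APP2"),
    StrategyEntry("Functional", "UAT", "APP1"),
]


def types_for(environment: str, instance: str) -> list:
    """Return the test types configured for one environment/instance pair."""
    return [
        entry.test_type
        for entry in STRATEGY
        if entry.environment == environment and entry.instance == instance
    ]


print(types_for("QA", "APP1"))    # ['Functional']
print(types_for("PROD", "APP1"))  # [] because no combination is configured
```

An environment and instance pair with no matching row simply yields no test types in this model.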

Test Definitions

FlexDeploy introduces the notion of a Test Definition. This abstraction represents one or more test cases related to a business use case. For example, a banking system might have test definitions such as "Loan arrangement", "Loan repayment", and "Loan overdue". When FlexDeploy runs automated tests, it actually runs test definitions one by one, and each test definition in turn runs the actual test cases with the corresponding testing tool. A test definition "knows" which testing tool to use, how to interact with it, which test cases (defined in the testing tool) to run, how to import the test results, and how to qualify them. To interact with a testing tool, a test definition uses a workflow.

Viewing Test Definitions

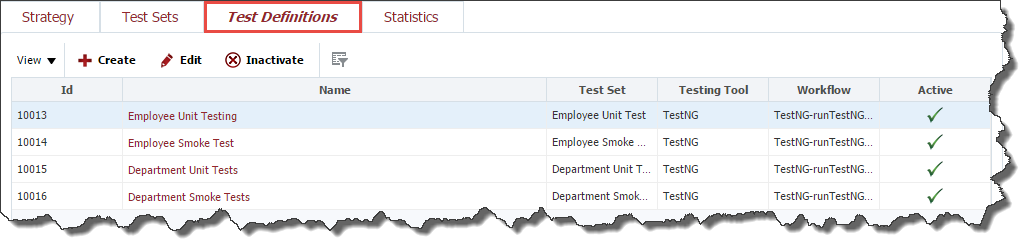

To view the list of test definitions defined for the project, select the Test Definitions tab.

Creating a Test Definition

To create a test definition, click the Create button.

At the General step of the wizard, enter values for the following fields:

| Field Name | Required | Description |
|---|---|---|
| Name | Yes | Name for the test definition. |
| Test Set | Yes | Select the Test Set to which the test definition belongs. |
| Testing Tool | Yes | Select the Testing Tool that should be used to run the tests. |
| Testing Instance | No | Select the Testing Instance where the testing tool is installed. This is useful for testing tools such as OATS, Selenium, and SoapUI, which usually have their own standalone installations. For testing tools such as JUnit or TestNG, leave this field empty, which means that the testing tool is installed and running on each environment/instance where the project is deployed. |
| Active | Yes | A flag indicating whether this test definition is active. Defaults to "Yes". |

Click the Next button to specify a workflow for test execution.

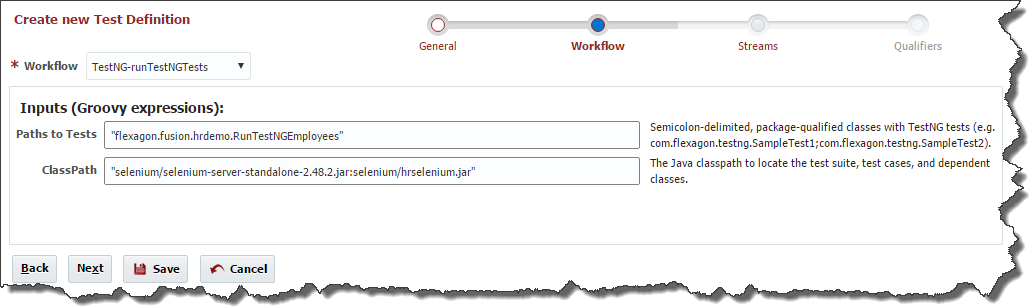

At the Workflow step of the wizard, select the workflow that will be used to run the tests. Note that only workflows of the Test Definition type can be selected here. Enter values for any inputs that are configured for the workflow.

Select the workflow appropriate for your test executions. Many workflows are available out of the box for the supported test tools, but you can also define your own test tool and workflow. Depending on the workflow you select, you may have a few inputs to configure.

- Inputs are provided as Groovy expressions, in which you can use any of the available property keys (plugin or workflow).

- Variables related to endpoint execution, such as the directory variables FD_TEMP_DIR and FD_ARTIFACTS_DIR, cannot be used here.

- Because inputs are Groovy expressions, all literals must be enclosed in quotes.

- If a property is defined for the current deploy instance (not the test instance), you do not have to qualify it.

- For example, "-P EndPoint="+FLEXDEPLOY_SERVER_HOSTNAME +":" + FLEXDEPLOY_SERVER_PORT + " -P Database=" + FDJDBC_URL

- In this example, FLEXDEPLOY_SERVER_HOSTNAME, FLEXDEPLOY_SERVER_PORT, and FDJDBC_URL are defined on the current deployment instance, so the instance code prefix is not needed. The qualified form TOMCAT_FDJDBC_URL works as well.

- An encrypted property, such as a password, may not be available here. In such situations, you can use replacement variables in your test files or command-line arguments. Whenever you use property replacement or command-line variables, they must be qualified with the deploy instance code.

- For example, with SoapUI you can pass arguments like this to use a password property (where TOMCAT is the instance code): "-P EndPoint="+FLEXDEPLOY_SERVER_HOSTNAME +":" + FLEXDEPLOY_SERVER_PORT + " -P Database=" + FDJDBC_URL + " -p\$TOMCAT_FD_JDBC_PASSWORD". The \$ ensures that the variable is not evaluated in Groovy and is instead passed as an environment variable to the underlying command-line call.

- This example uses Unix environment variable syntax for the password (assuming the tests run on Unix), and in such cases the variable must be qualified with the instance code.
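
To make the qualification rules above concrete, the following Python sketch mimics how such an input expression might resolve into a command-line argument string. It is a simplified model and not FlexDeploy's implementation: the `PROPERTIES` store, the `resolve` and `build_arguments` helpers, and all property values are hypothetical. Only the property names and the TOMCAT instance code come from the examples above.

```python
# Hypothetical property store: every key is qualified with an instance code.
PROPERTIES = {
    "TOMCAT_FLEXDEPLOY_SERVER_HOSTNAME": "fd.example.com",
    "TOMCAT_FLEXDEPLOY_SERVER_PORT": "8080",
    "TOMCAT_FDJDBC_URL": "jdbc:example://db.example.com/fd",
}

# Assumption: TOMCAT is the instance the project is being deployed to.
CURRENT_INSTANCE = "TOMCAT"


def resolve(name: str) -> str:
    """Look up a property by qualified name, or by its unqualified name
    relative to the current deploy instance."""
    if name in PROPERTIES:
        return PROPERTIES[name]
    return PROPERTIES[CURRENT_INSTANCE + "_" + name]


def build_arguments() -> str:
    """Assemble the argument string the same way the Groovy expression does."""
    return (
        "-P EndPoint=" + resolve("FLEXDEPLOY_SERVER_HOSTNAME") + ":"
        + resolve("FLEXDEPLOY_SERVER_PORT")
        + " -P Database=" + resolve("FDJDBC_URL")
        # The password is NOT resolved here. The literal $NAME is left for
        # the shell to expand, which is why it carries the instance code.
        # (Groovy needs \$ for a literal dollar sign; Python does not.)
        + " -p$TOMCAT_FD_JDBC_PASSWORD"
    )


print(build_arguments())
```

In this model the unqualified and qualified names resolve to the same value, while the password reference stays in the string as a literal environment variable for the command line to expand.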

Click the Next button to configure the streams for the test definition.

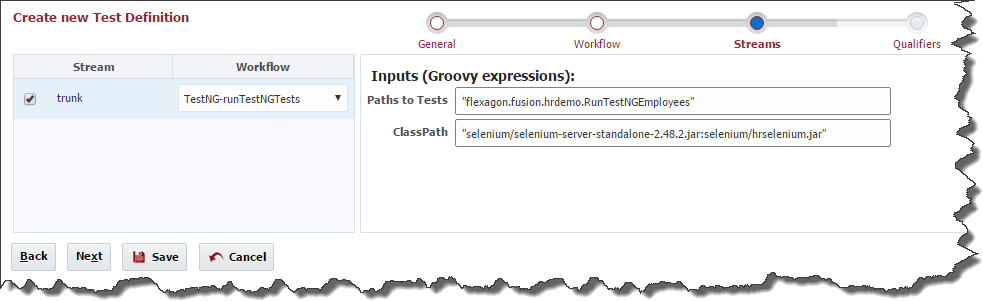

At the Streams step of the wizard, select the project streams for which the test definition is suitable. By default, all project streams are selected and use the workflow and input values defined at the Workflow step. For each project stream, you can override the workflow input values or select a different workflow to run the tests.

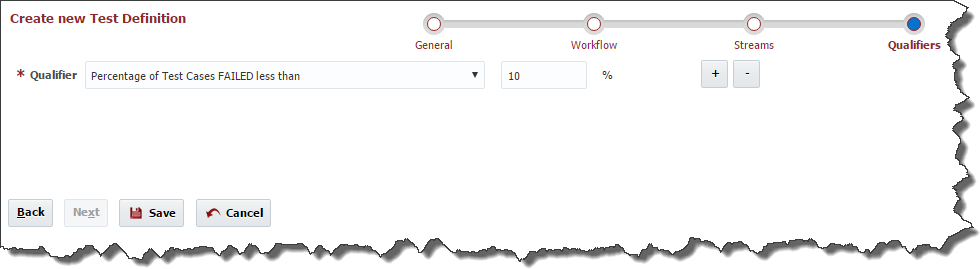

A Test Definition can analyze test results and conclude whether the test run succeeded or failed. This feature is based on qualifiers. A test definition can contain any number of qualifiers, and the test definition run is considered successful only if all qualifiers defined for it return true. If no qualifier is set up, the test definition result is considered a success.

Click the Next button to configure qualifiers for the test definition.

To add a new qualifier to the test definition, click the plus button. To remove a qualifier from the test definition, click the minus button.

FlexDeploy comes out-of-the-box with the following predefined test qualifiers:

| Qualifier | Argument | Notes |
|---|---|---|
| Number of Test Cases PASSED greater than "X" | Integer value | |
| Number of Test Cases FAILED less than "X" | Integer value | |
| Percentage of Test Cases PASSED greater than "X" % | Percentage value | |
| Percentage of Test Cases FAILED less than "X" % | Percentage value | |
| Average Response Time Less than or equal to | Integer value (milliseconds) | The expected maximum average response time. The limit applies to each test case executed by the definition: if the average response time of any test case exceeds the specified value, the test definition is considered Failed. |
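
The qualifier rule described above (every configured qualifier must return true, and no qualifiers means success) can be sketched as follows. This is a minimal illustration and not FlexDeploy code: the `CaseResult` type and the helper names are hypothetical, and treating an empty result list as zero percent failed is an assumption made for the sketch.

```python
from dataclasses import dataclass


@dataclass
class CaseResult:
    """Outcome of one test case (hypothetical model)."""
    name: str
    passed: bool
    avg_response_ms: float


def passed_count_greater_than(x):
    # Number of Test Cases PASSED greater than "X"
    return lambda results: sum(r.passed for r in results) > x


def failed_percentage_less_than(x):
    # Percentage of Test Cases FAILED less than "X" %
    def check(results):
        if not results:
            return True  # assumption: no test cases means nothing failed
        failed = sum(not r.passed for r in results)
        return 100.0 * failed / len(results) < x
    return check


def avg_response_at_most(limit_ms):
    # The limit applies to every test case, not to the overall average.
    return lambda results: all(r.avg_response_ms <= limit_ms for r in results)


def definition_succeeded(results, qualifiers):
    # all() over an empty list is True: no qualifiers means Success.
    return all(q(results) for q in qualifiers)


results = [
    CaseResult("login", True, 120),
    CaseResult("transfer", True, 300),
    CaseResult("logout", False, 90),
]

print(definition_succeeded(results, []))  # True
print(definition_succeeded(results, [passed_count_greater_than(1),
                                     failed_percentage_less_than(50)]))  # True
print(definition_succeeded(results, [avg_response_at_most(250)]))  # False
```

The last call fails because a single test case (300 ms) exceeds the 250 ms limit, even though the other two are well below it.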

Click the Save button to save the changes to the test definition and return to the list of test definitions defined for the project.

Editing a Test Definition

To edit or view a test definition, select an existing test definition and click the Edit button, or click the test definition name.

The edit screen contains all attributes of the test definition and displays Workflow, Streams and Qualifiers data on separate tabs. Click the Save button to save the changes to the test definition or click the Back button to cancel the changes and return to the list of test definitions defined for the project.

Test Definition Statistics

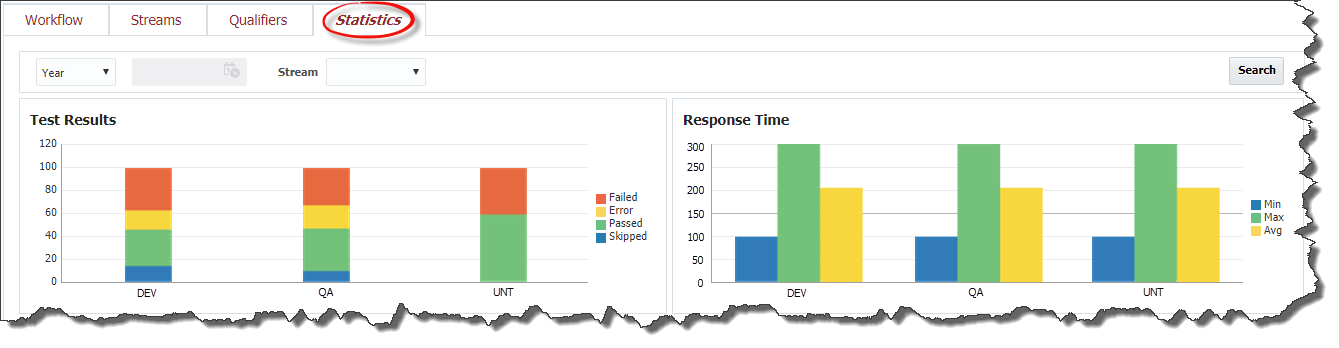

The test definition view screen also contains a Statistics tab, which shows historical information about test definition executions.

The Statistics tab has two charts: Test Results and Response Time. They show the same data as described in the Dashboard section, limited to this test definition.

Test Sets

A test set is a group of test definitions defined for the project. For example, for a banking system we could define test sets such as "Loans", "Deposits", and "Forex".

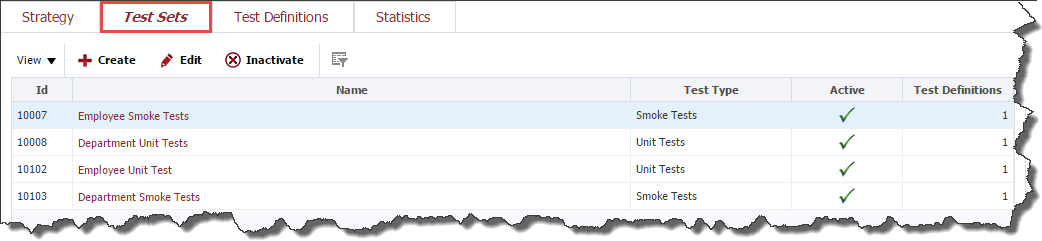

Viewing Test Sets

To view the list of test sets defined for the project, navigate to the Test Automation tab, as described in the Project Configuration section, and select the Test Sets tab.

Creating a Test Set

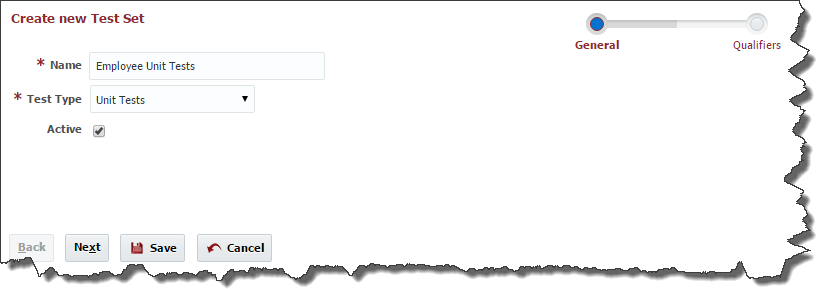

To create a test set, click the Create button.

At the General step of the wizard, enter values for the following fields:

| Field Name | Required | Description |
|---|---|---|
| Name | Yes | Name for the test set. |
| Test Type | Yes | Select the Test Type for the test set. The test set groups test definitions related to this test type. |
| Active | Yes | A flag indicating whether this test set is active. Defaults to "Yes". |

A test set can contain its own qualifiers, which check whether the entire set of test definitions passed or failed. The same rule applies as for test definitions: the test set run is considered successful only if all qualifiers defined for the test set return true.

Click the Next button to configure qualifiers for the test set. Qualifiers are added to and removed from a test set in the same way as for test definitions.

For Test Sets FlexDeploy provides the following preconfigured qualifiers:

| Qualifier | Argument |
|---|---|
| Number of Test Definitions PASSED greater than "X" | Integer value |
| Number of Test Definitions FAILED less than "X" | Integer value |
| Percentage of Test Definitions PASSED greater than "X" % | Percentage value |
| Percentage of Test Definitions FAILED less than "X" % | Percentage value |
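
At the test set level the same rule is applied to the outcomes of whole test definitions rather than individual test cases. The short sketch below illustrates that roll-up. The `outcomes` mapping, the helper names, and the thresholds are hypothetical. The definition names are the banking examples used earlier in this document.

```python
def percentage_passed(outcomes: dict) -> float:
    """Share of test definitions that succeeded, as a percentage."""
    return 100.0 * sum(outcomes.values()) / len(outcomes)


def failed_count(outcomes: dict) -> int:
    """Number of test definitions that did not succeed."""
    return sum(not ok for ok in outcomes.values())


def set_succeeded(outcomes: dict, qualifiers: list) -> bool:
    # As with test definitions, an empty qualifier list yields success.
    return all(q(outcomes) for q in qualifiers)


# Hypothetical outcomes of the definitions in a "Loans" test set.
outcomes = {
    "Loan arrangement": True,
    "Loan repayment": True,
    "Loan overdue": False,
}

qualifiers = [
    lambda o: percentage_passed(o) > 60,  # Percentage PASSED greater than "60" %
    lambda o: failed_count(o) < 2,        # Number FAILED less than "2"
]

print(set_succeeded(outcomes, qualifiers))  # True
```

Two of the three definitions passed (about 66.7 percent) and only one failed, so both qualifiers hold and the set is considered successful in this model.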

Click the Save button to save the changes to the test set and return to the list of test sets defined for the project.

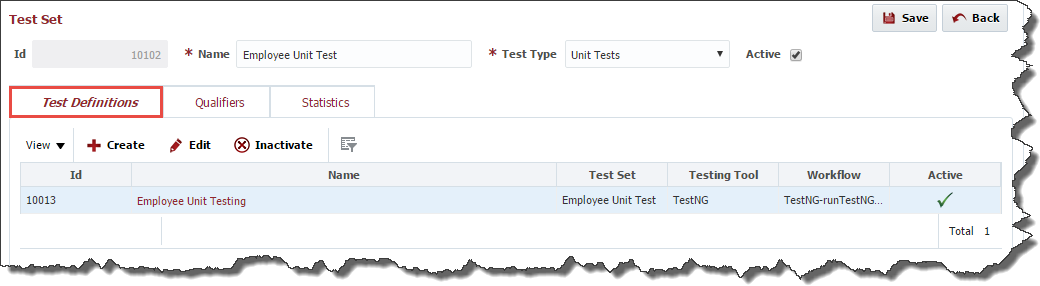

Editing a Test Set

To edit or view a test set, select an existing test set and click the Edit button, or click the test set name, which is a hyperlink.

The edit screen contains all attributes of the test set and displays Test Definitions, Qualifiers, and Statistics data on separate tabs. The Test Definitions tab shows all test definitions related to this test set and lets you create and edit test definitions in the same way as described in the Test Definitions section. If no qualifier is set up, the test set result is considered a success.

Click the Save button to save the changes to the test set or click the Back button to cancel the changes and return to the list of test sets defined for the project.

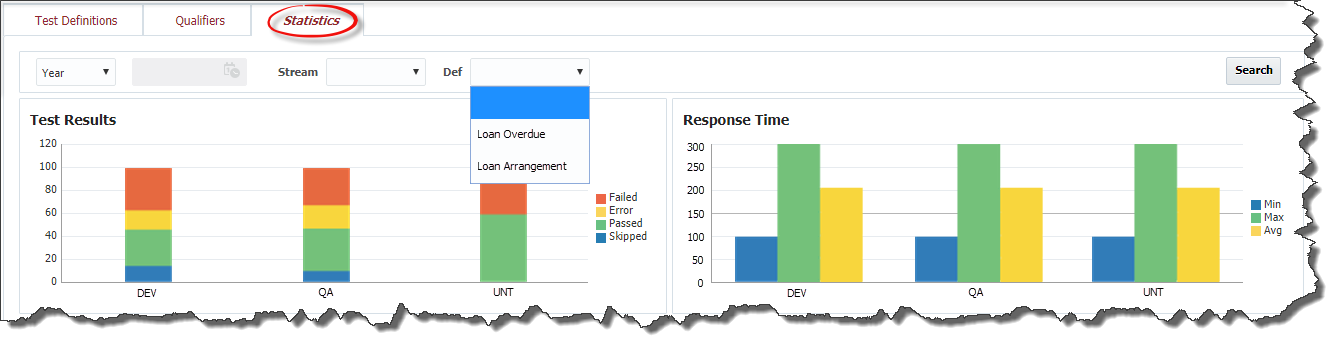

Test Set Statistics

The test set view screen contains a Statistics tab, which shows historical information about test set executions. The tab contains Test Results and Response Time charts that show the same information as described in the Dashboard section, limited to this test set.
